In a context where delivery cycles are accelerating and web application complexity keeps growing, the question is no longer whether to test, but how to test effectively without slowing the development pace. Automated testing represents one of the most cost-effective practices for technical teams — provided it is approached strategically.

The hidden cost of bugs in production

A bug detected in production costs far more than one caught during development. Commonly cited industry estimates put the cost of fixing a defect in production at 10 to 100 times that of fixing one identified during the design or coding phase. And fix time is only part of the bill: there is also the cost of lost trust.

When a user encounters a critical bug — a payment that silently fails, data displayed incorrectly, a form that doesn’t submit — trust in your product erodes. Emergency fixes force developers to abandon their current work, switch context, and deploy under pressure. This reactive cycle consumes considerable resources and generates unnecessary stress.

Manual quality assurance, while essential for certain scenarios, is no longer sufficient for modern web applications. A human tester cannot verify every edge case, every browser combination, every user flow after every deployment. The frequency of production releases — sometimes multiple times per day — makes an exclusively manual approach simply impractical.

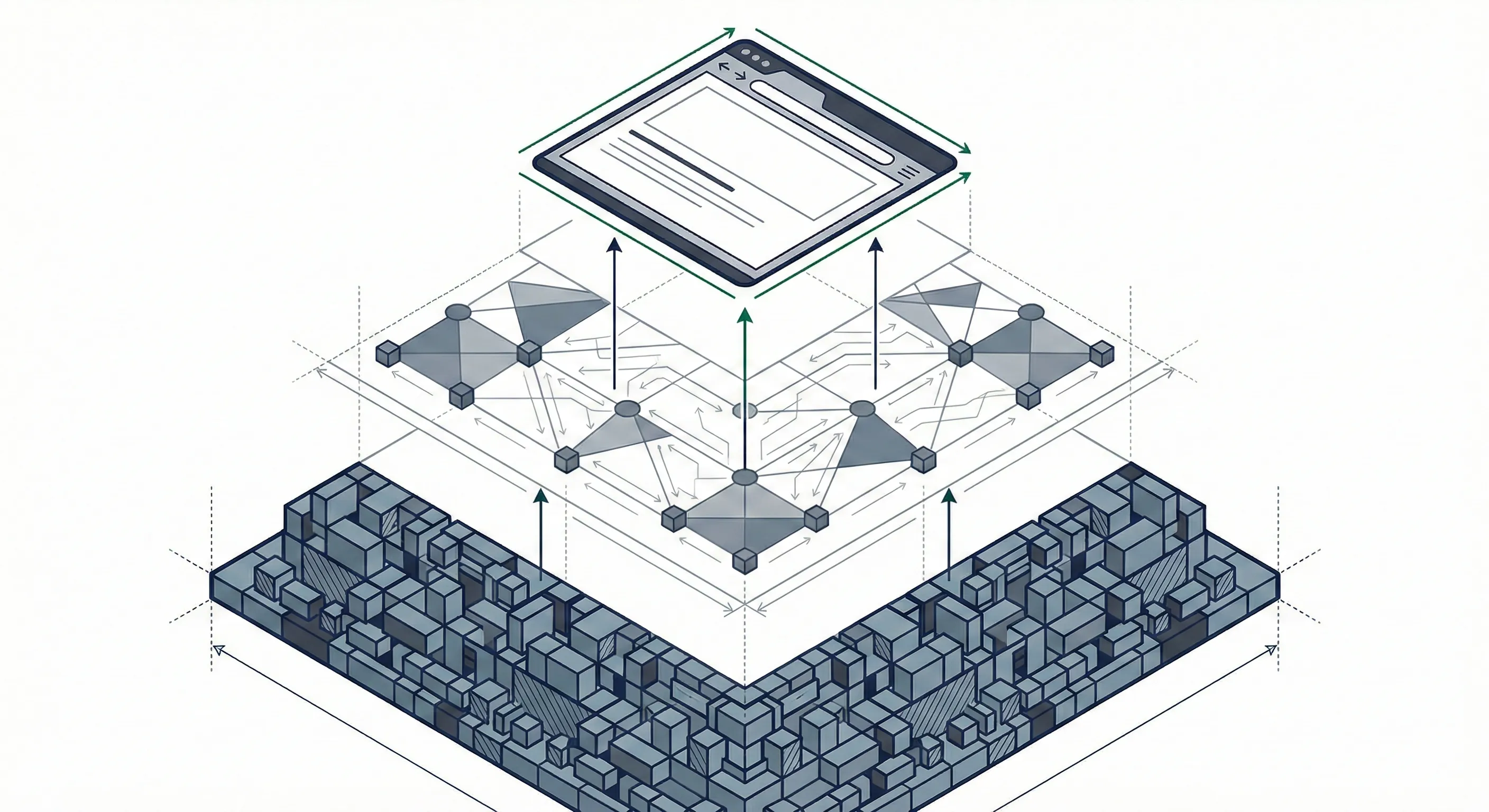

The testing pyramid: a proven framework

The concept of the testing pyramid, popularized by Mike Cohn, provides a strategic framework for structuring your testing efforts. The principle is simple: many unit tests at the base (fast, inexpensive), a moderate number of integration tests in the middle, and a few end-to-end tests at the top (slow, but high confidence).

The goal is to get fast feedback at the base of the pyramid — a developer should know within seconds if their code breaks something — while maintaining a high level of confidence at the top, where real user behavior is simulated.

Unit tests — the foundation

Unit tests verify the behavior of individual functions, business logic modules, and isolated edge cases. They form the foundation of any solid testing strategy. Their strength lies in their execution speed: a suite of several hundred unit tests runs in just a few seconds.

In JavaScript and TypeScript, tools like Jest and Vitest allow you to write and run unit tests with minimal configuration. A good unit test is deterministic and isolated (no external dependencies), and it implicitly documents the expected behavior of the code.

- Coverage of pure functions, validations, and data transformations

- Execution in milliseconds — ideal for immediate feedback

- Low maintenance cost when tests are well structured

- High coverage achievable with a reasonable investment
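As a minimal sketch of what such a test can look like, the example below checks a hypothetical pure function, `parseQuantity`, against a nominal case and several edge cases. The function name and its rules (whole numbers from 1 to 99) are assumptions made for illustration. The checks use Node's built-in `assert` module so the snippet runs without extra setup; in Jest or Vitest the same checks would typically live in `test` blocks and use `expect`.

```typescript
import { strict as assert } from "node:assert";

// Hypothetical pure function under test: validates and normalizes a cart quantity.
// The accepted range (whole numbers from 1 to 99) is an assumption for this sketch.
function parseQuantity(input: string): number {
  const trimmed = input.trim();
  if (!/^\d+$/.test(trimmed)) {
    throw new RangeError(`Invalid quantity: "${input}"`);
  }
  const value = Number(trimmed);
  if (value < 1 || value > 99) {
    throw new RangeError(`Quantity out of range: ${value}`);
  }
  return value;
}

// Nominal case: surrounding whitespace is ignored.
assert.equal(parseQuantity(" 3 "), 3);

// Boundaries of the accepted range.
assert.equal(parseQuantity("1"), 1);
assert.equal(parseQuantity("99"), 99);

// Edge cases: each of these inputs must be rejected.
assert.throws(() => parseQuantity("0"), RangeError);
assert.throws(() => parseQuantity("100"), RangeError);
assert.throws(() => parseQuantity("2.5"), RangeError);
assert.throws(() => parseQuantity(""), RangeError);
```

Each check is deterministic and touches no database, network, or clock, which is what keeps this kind of test fast.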

Integration tests — verifying connections

Where unit tests verify individual pieces, integration tests validate that those pieces work correctly together. They test interactions between modules, API contracts between services, database queries, and calls to external services (typically with controlled mocks).

A typical integration test might verify that a REST endpoint returns the correct data after a database insertion, or that a React component correctly displays data received from a service. These tests are slower than unit tests, but they catch a category of bugs that unit tests cannot detect: interface errors between components.

- Validation of contracts between modules and services

- Verification of database queries with test databases

- Use of mocks for external dependencies

- Detection of cross-layer communication bugs
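The sketch below illustrates the idea with a hypothetical `SignupService` wired to two collaborators: an in-memory repository standing in for a test database, and a recording mock standing in for an external email service. All class and method names are assumptions made for illustration, and the calls are synchronous for brevity, whereas real database and network calls would be asynchronous.

```typescript
import { strict as assert } from "node:assert";

interface User {
  id: number;
  email: string;
}

// Stand-in for a test database; a real suite would target a disposable database.
class InMemoryUserRepository {
  private users: User[] = [];

  insert(email: string): User {
    const user: User = { id: this.users.length + 1, email };
    this.users.push(user);
    return user;
  }

  findByEmail(email: string): User | undefined {
    return this.users.find((u) => u.email === email);
  }
}

// Contract of the (hypothetical) external email service.
interface Mailer {
  sendWelcome(to: string): void;
}

// Controlled mock: records calls instead of sending anything.
class RecordingMailer implements Mailer {
  readonly sent: string[] = [];

  sendWelcome(to: string): void {
    this.sent.push(to);
  }
}

// The unit being integrated: it depends on both collaborators.
class SignupService {
  private readonly repo: InMemoryUserRepository;
  private readonly mailer: Mailer;

  constructor(repo: InMemoryUserRepository, mailer: Mailer) {
    this.repo = repo;
    this.mailer = mailer;
  }

  register(email: string): User {
    const normalized = email.trim().toLowerCase();
    if (this.repo.findByEmail(normalized)) {
      throw new Error(`Email already registered: ${normalized}`);
    }
    const user = this.repo.insert(normalized);
    this.mailer.sendWelcome(user.email);
    return user;
  }
}

// Integration test: checks that the pieces cooperate, not just each piece alone.
const repo = new InMemoryUserRepository();
const mailer = new RecordingMailer();
const service = new SignupService(repo, mailer);

const user = service.register("  Ada@Example.com ");
assert.deepEqual(repo.findByEmail("ada@example.com"), user); // stored, normalized
assert.deepEqual(mailer.sent, ["ada@example.com"]); // welcome email requested once

// A duplicate registration is rejected and triggers no second email.
assert.throws(() => service.register("ada@example.com"), /already registered/);
assert.deepEqual(mailer.sent, ["ada@example.com"]);
```

The assertions target the seams between components: what ends up in storage and what the service asks of the mailer.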

End-to-end tests — simulating the real user

End-to-end (E2E) tests reproduce real user journeys in a full browser environment. They verify that the entire chain — interface, API, database — works as expected from the end user’s perspective.

Tools like Playwright and Cypress allow you to automate these scenarios with clear syntax and advanced debugging capabilities. You can simulate a complete registration process, a purchase journey, or dashboard management — exactly as a real user would.

However, E2E tests should be used sparingly. They are inherently slower, more fragile in the face of interface changes, and more expensive to maintain. Reserve them for critical flows: registration, authentication, payment, and sensitive form submissions.
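To give a rough idea of the shape of such a scenario, the sketch below scripts a registration journey against a small `BrowserDriver` interface. That interface is an assumption made for illustration: it is a deliberately reduced abstraction, not the API of any specific tool, and the URL and selectors are hypothetical.

```typescript
// Minimal set of browser actions, written in the async style that browser
// automation tools generally use. This interface is an assumption for
// illustration, not the API of a specific tool.
interface BrowserDriver {
  goto(url: string): Promise<void>;
  fill(selector: string, value: string): Promise<void>;
  click(selector: string): Promise<void>;
  textContent(selector: string): Promise<string | null>;
}

// One critical flow: a visitor registers and sees a confirmation banner.
// The URL and selectors are hypothetical.
async function registrationJourney(browser: BrowserDriver, email: string): Promise<void> {
  await browser.goto("https://staging.example.com/signup");
  await browser.fill("#email", email);
  await browser.fill("#password", "a-long-test-passphrase");
  await browser.click("button[type=submit]");

  // Assert on what the user actually sees, not on internal state.
  const banner = await browser.textContent("[data-testid=welcome]");
  if (banner === null || !banner.includes(email)) {
    throw new Error(`Expected a welcome banner mentioning ${email}, got: ${banner}`);
  }
}
```

Playwright's `page` object exposes methods with these four names, so a journey like this would usually be written directly against it inside the tool's own test runner. Note how many moving parts a single journey touches, which is one reason these tests cost more to maintain than unit tests.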

Integrating tests into your CI/CD pipeline

Test automation only reaches its full potential when integrated into the continuous integration and deployment (CI/CD) pipeline. Every pull request should automatically trigger the execution of the test suite. The principle is clear: no green light, no merge.

A progressive approach is recommended. Unit tests run first — if a unit test fails, feedback is immediate and the pipeline stops without wasting resources. Then come integration tests, followed by E2E tests. This “fail fast” strategy optimizes feedback time and infrastructure resource usage.
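The ordering logic can be sketched in a few lines. The `runPipeline` function and the stage definitions below are hypothetical: in a real pipeline each stage would invoke a test command through the CI system rather than return a hard-coded value.

```typescript
type Stage = { name: string; run: () => boolean };
type StageResult = { name: string; passed: boolean };

// Runs stages in order and stops at the first failure, so slower stages
// are never started once a faster one has failed.
function runPipeline(stages: Stage[]): StageResult[] {
  const results: StageResult[] = [];
  for (const stage of stages) {
    const passed = stage.run();
    results.push({ name: stage.name, passed });
    if (!passed) break;
  }
  return results;
}

// Hypothetical stages, ordered from fastest to slowest.
const pipelineResults = runPipeline([
  { name: "unit", run: () => true },
  { name: "integration", run: () => false },
  { name: "e2e", run: () => true },
]);

console.log(
  pipelineResults.map((r) => `${r.name}: ${r.passed ? "passed" : "failed"}`).join(", "),
);
// unit: passed, integration: failed
```

The E2E stage never starts in this run, which is exactly the saving the fail-fast ordering is meant to provide.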

Integration into the CI/CD pipeline transforms testing from a manual chore into an automatic safety net. Every code change is validated before reaching the main branch, which considerably reduces the risk of regressions in production.

Code coverage: a metric, not a goal

Code coverage measures the percentage of lines, branches, or functions executed by your tests. It’s a useful metric, but beware of the temptation to aim for 100%. You can have 100% coverage and still miss critical bugs — for example, if the tests verify that the code runs without verifying that it produces the correct result.
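The short example below makes the trap concrete. The `applyDiscount` function is hypothetical and deliberately buggy; both "tests" execute every line of it, so both yield full line coverage, but only one of them checks the result.

```typescript
// Deliberately buggy: the discount is added instead of subtracted.
function applyDiscount(price: number, percent: number): number {
  return price + (price * percent) / 100;
}

function expectEqual(actual: number, expected: number): void {
  if (actual !== expected) {
    throw new Error(`Expected ${expected}, got ${actual}`);
  }
}

// Executes every line of applyDiscount, so line coverage is 100%,
// but verifies nothing: this "test" passes despite the bug.
function testRunsWithoutChecking(): void {
  applyDiscount(200, 10);
}

// Same coverage, but the result is checked: this test exposes the bug.
function testChecksResult(): void {
  expectEqual(applyDiscount(200, 10), 180);
}

testRunsWithoutChecking(); // completes: the bug goes unnoticed

try {
  testChecksResult();
} catch (error) {
  console.log((error as Error).message); // Expected 180, got 220
}
```

A coverage report cannot tell these two tests apart, which is why the number alone says little about the quality of the suite.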

Rather than chasing an absolute number, focus your efforts on the critical paths of your application: the payment process, authentication, sensitive data handling, and financial calculations. Use coverage as a trend indicator — declining coverage over time signals a problem — rather than as a goal in itself.
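One possible way to treat coverage as a trend is to compare each build against a recorded baseline and flag only meaningful drops. The function name, the baseline idea, and the 0.5-point tolerance below are assumptions chosen for illustration, not a recommended threshold.

```typescript
// Returns true when coverage has fallen by more than `tolerance` percentage
// points relative to the recorded baseline. Both values are percentages (0-100).
function coverageRegressed(baseline: number, current: number, tolerance = 0.5): boolean {
  return baseline - current > tolerance;
}

console.log(coverageRegressed(82.4, 81.0)); // true: a drop of about 1.4 points
console.log(coverageRegressed(82.4, 82.1)); // false: within tolerance
console.log(coverageRegressed(82.4, 85.0)); // false: coverage improved
```

A check like this flags a downward drift without forcing the team to hit a fixed target.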

Pragmatic strategies for growing teams

Building a culture of automated testing doesn’t happen overnight. Here are five proven strategies for teams looking to make realistic progress:

- Start with critical paths — Identify the features whose failure costs the most (payments, registrations, third-party integrations) and test them first. The return on investment is immediate.

- Write a test for every bug fixed — Before fixing a bug, write a test that reproduces it. This test prevents regression and serves as living documentation of the resolved issue.

- Automate regression tests — Each test added accumulates and forms an increasingly robust safety net. Over time, the regression suite covers the most common and risky scenarios.

- Integrate tests into code review — Establish the convention that every pull request must include relevant tests. Code reviews become an opportunity to validate not just the code, but also its test coverage.

- Measure the time saved — Compare the cost of writing and maintaining tests against the cost of the incidents, rollbacks, and debugging sessions they help you avoid. Tracking these figures over time gives the team concrete data to justify the investment.
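To illustrate the second strategy, here is a sketch of a regression test written from a hypothetical bug report: splitting 100 cents three ways returned [33, 33, 33] and silently lost one cent. The `splitCents` function, the bug, and the fix are all invented for this example.

```typescript
import { strict as assert } from "node:assert";

// Fixed version: the remainder is handed out one cent at a time,
// so no cent is lost. Assumes a non-negative whole number of cents
// and a positive whole number of parts.
function splitCents(totalCents: number, parts: number): number[] {
  const base = Math.floor(totalCents / parts);
  const remainder = totalCents % parts;
  return Array.from({ length: parts }, (_, i) => base + (i < remainder ? 1 : 0));
}

// Regression test derived from the bug report. It fails against the old
// behavior ([33, 33, 33]) and keeps guarding the fix afterwards.
const shares = splitCents(100, 3);
assert.deepEqual(shares, [34, 33, 33]);
assert.equal(
  shares.reduce((sum, share) => sum + share, 0),
  100,
);
```

Writing the test before the fix confirms that it really reproduces the bug: it should fail first, then pass once the fix is in place.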

The return on investment of automated testing

The concrete benefits of automated testing are measurable. Teams with a good test suite tend to deploy more frequently with fewer rollbacks. Developers refactor code with confidence, knowing that the tests are likely to catch regressions before they ship. Time previously spent debugging production incidents is redirected toward developing new features.

At scale, automated testing creates a virtuous cycle: the more robust the test suite, the more confidently the team can deliver quickly. The faster you deliver, the faster the market feedback arrives. The faster the feedback, the more efficiently the product evolves.

Automated testing is not an added cost — it’s an accelerator. Teams that invest early in a solid testing strategy see a significant reduction in time spent fixing bugs and an increase in their delivery velocity. At Flowstack, we help technical teams implement effective testing strategies, adapted to the reality of their product and their constraints. Because delivering fast is good — delivering fast and well is better.